Soil Tutorial¶

Introduction¶

This notebook is an introduction to the soil agent-based social network simulation framework. It will focus on a specific use case: studying the propagation of disinformation through TV and social networks.

The steps we will follow are:

- Cover some basics about simulations in Soil (environments, agents, etc.)

- Simulate a basic scenario with a single agent

- Add more complexity to our scenario

- Run the simulation using different configurations

- Analyse the results of each simulation

The simulations in this tutorial will be kept simple, for the sake of clarity. However, they provide all the building blocks necessary to model, run and analyse more complex scenarios.

But before that, let’s import the soil module and networkx.

[64]:

from soil import *

from soil import analysis

import networkx as nx

import matplotlib.pyplot as plt

Basic concepts¶

There are two main elements in a soil simulation:

- The environment or model. It assigns agents to nodes in the network, and stores the environment parameters (shared state for all agents).

soil.NetworkEnvironment models also contain a network topology (accessible through self.G). A simulation may use an existing NetworkX topology, or generate one on the fly. The NetworkEnvironment class is parameterized, which makes it easy to initialize environments with a variety of network topologies. In this tutorial, we will manually add a network to each environment.

- One or more agents. Agents are programmed with their individual behaviors, and they can communicate with the environment and with other agents. There are several types of agents, depending on their behavior and their capabilities. Some examples of built-in types of agents are:

- Network agents, which are linked to a node in the topology. They have additional methods to access their neighbors.

- FSM (Finite state machine) agents. Their behavior is defined in terms of states, and an agent will move from one state to another.

- Evented agents, an actor-based model of agents, which can communicate with one another through message passing.

- For convenience, a general soil.Agent class is provided, which inherits from Network, FSM and Evented at the same time.

Soil provides several abstractions over events to make developing agents easier. This means you can use events (timeouts, delays) in Soil, but for the most part we will assume your models will be step-based.

Modeling behaviour¶

Our first step will be to model how every person in the social network reacts to hearing a piece of disinformation (news). We will follow a very simple model based on a finite state machine.

A person may be in one of two states: neutral (the default state) and infected. A neutral person may hear about a piece of disinformation either on the TV (with probability prob_tv_spread) or through their friends. Once a person has heard the news, they will spread it to their friends (with a probability prob_neighbor_spread). Some users do not have a TV, so they will only be infected by their friends.
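The model described above can be sketched in plain Python before any Soil code is involved. This is only an illustration under stated assumptions: the function `simulate`, the adjacency dictionary and the `has_tv` mapping are hypothetical names that are not part of Soil, and the update rule (everyone is evaluated once per step) is a simplification.

```python
import random


def simulate(neighbors, has_tv, prob_tv_spread, prob_neighbor_spread, steps, seed=0):
    """Run the two-state model and return the set of infected people."""
    rng = random.Random(seed)
    infected = set()
    for _ in range(steps):
        newly_infected = set()
        for person in neighbors:
            if person in infected:
                # Infected people try to spread the news to each neutral friend
                for friend in neighbors[person]:
                    if friend not in infected and rng.random() < prob_neighbor_spread:
                        newly_infected.add(friend)
            elif has_tv.get(person, False) and rng.random() < prob_tv_spread:
                # Neutral people with a TV may hear the news from the broadcast
                newly_infected.add(person)
        infected |= newly_infected
    return infected


# Illustrative network: edges 0-1, 0-2, 2-3, plus an isolated person 4
neighbors = {0: [1, 2], 1: [0], 2: [0, 3], 3: [2], 4: []}
has_tv = {0: True}
result = simulate(neighbors, has_tv, prob_tv_spread=1.0, prob_neighbor_spread=1.0, steps=5)
print(sorted(result))
```

With both probabilities set to 1, everyone connected to the TV owner ends up infected, while the isolated person never hears the news.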

The spreading probabilities will change over time due to different factors. We will represent this variance using an additional agent which will not be a part of the social network.

Modelling Agents¶

The following sections will cover the basics of developing agents in Soil.

For more advanced patterns, please check the examples folder in the repository.

Basic agents¶

The most basic agent in Soil is soil.BaseAgent. These agents implement their behavior by overriding the step method, which will be run in every simulation step. Only one agent will be running at any given time, and it will be doing so until the step function returns.

Agents can access their environment through their self.model attribute. This is most commonly used to get access to the environment parameters and methods. Here is a simple example of an agent:

class ExampleAgent(BaseAgent):
    def init(self):
        self.is_infected = False
        self.steps_neutral = 0

    def step(self):
        # Implement agent logic
        if self.is_infected:
            ...  # Do something, like infecting other agents
            return self.die("No need to do anything else")  # Stop forever
        else:
            ...  # Do something
            self.steps_neutral += 1
            if self.steps_neutral > self.model.max_steps_neutral:
                self.is_infected = True

Any kind of agent behavior can be implemented with this step function. However, it has two main drawbacks: 1) complex behaviors can become difficult to both write and understand; 2) these behaviors are not composable.

FSM agents¶

One way to solve both issues is to model agents as [Finite-state Machines](https://en.wikipedia.org/wiki/Finite-state_machine) (FSM, for short). An FSM defines a series of possible states for the agent, and the transitions between these states. These states can be modelled and extended independently.

This is modelled in Soil through the soil.FSM class. Agents that inherit from soil.FSM do not need to specify a step method. Instead, we describe each finite state with a function. To change to another state, a function may return the new state, or the id of a state. If no state is returned, the state remains unchanged.

The current state of the agent can be checked with agent.state_id. That state id can be used to look for other agents in that specific state.

Our previous example could be expressed like this:

class FSMExample(FSM):
    def init(self):
        self.steps_neutral = 0

    @state(default=True)
    def neutral(self):
        ...  # Do something
        self.steps_neutral += 1
        if self.steps_neutral > self.model.max_steps_neutral:
            return self.infected  # Change state

    @state
    def infected(self):
        ...  # Do something
        return self.die("No need to do anything else")
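To make the transition mechanics concrete, here is a plain-Python sketch of the idea that a state function may return the next state, and that returning nothing keeps the current one. `TinyFSM` is a hypothetical class written for this illustration; it does not reproduce how soil.FSM is implemented.

```python
class TinyFSM:
    """Toy state machine: each state is a method that may return the next state."""

    def __init__(self, max_steps_neutral):
        self.max_steps_neutral = max_steps_neutral
        self.steps_neutral = 0
        self.current = self.neutral  # default state

    def neutral(self):
        self.steps_neutral += 1
        if self.steps_neutral > self.max_steps_neutral:
            return self.infected  # request a transition
        # Falling through returns None, which keeps the current state

    def infected(self):
        return None

    def step(self):
        next_state = self.current()
        if next_state is not None:
            self.current = next_state
        return self.current.__name__


agent = TinyFSM(max_steps_neutral=2)
history = [agent.step() for _ in range(4)]
print(history)
```

Each state can be read and tested on its own, which is the composability benefit mentioned above.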

Async agents¶

Another design pattern that can be very useful in some cases is to model each step (or a specific state) using asynchronous functions (and the await keyword). Asynchronous functions will be paused on await, and resumed at a later step from the same point.

The following agent will do something for self.model.max_steps steps and then stop forever.

class AsyncExample(BaseAgent):
    async def step(self):
        for i in range(self.model.max_steps):
            self.do_something()
            await self.delay()  # Signal the scheduler that this agent is done for now
        return self.die("No need to do anything else")

Notice that this trivial example could be implemented with a regular step and an attribute that counts how many times the agent has run so far. By using an async function we avoid complicating the logic of our function or adding spurious attributes.
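The pause-and-resume behaviour can be illustrated without Soil by using a plain generator, where each `yield` plays the role of `await self.delay()`. The names `agent_behavior` and `run` are hypothetical, and the driver loop is only a stand-in for a scheduler, not Soil's actual one.

```python
def agent_behavior(max_steps, log):
    """Generator standing in for an async step: each yield pauses until the next tick."""
    for i in range(max_steps):
        log.append(f"work {i}")
        yield  # pause here; resume from this exact point on the next tick
    log.append("done")


def run(behavior, ticks):
    """Hypothetical driver that resumes the behavior once per tick."""
    for _ in range(ticks):
        try:
            next(behavior)
        except StopIteration:
            break  # the behavior finished; nothing left to resume


log = []
run(agent_behavior(max_steps=3, log=log), ticks=10)
print(log)
```

The loop counter lives in the generator's local state, so no extra attribute is needed to remember how many times the agent has run.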

Telling the scheduler when to wake up an agent¶

By default, every agent will be called in every simulation step, and the time elapsed between two steps is controlled by the interval attribute in the environment.

But agents may signal the scheduler how long to wait before calling them again by returning (or yielding) a value other than None. This is especially useful when an agent is going to be dormant for a long time. There are two convenience methods to calculate the value to return: Agent.delay, which takes a time delay; and Agent.at, which takes an absolute time at which the agent should be awakened. A return (or yield) value of None will default to a wait of 1 unit of time.

When an FSM agent returns, it may signal two things: how long to wait, and a state to transition to. This can be done by using the delay and at methods of each state.
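As a rough mental model of this kind of scheduling, the sketch below keeps agents in a priority queue ordered by wake-up time and treats a return value of None as a delay of one time unit. `run_schedule` and `sleeper` are hypothetical names, and the sketch assumes nothing about Soil's internal scheduler beyond what the text above states.

```python
import heapq


def run_schedule(agents, max_time):
    """Toy scheduler: agents are callables taking `now` and returning a delay (or None)."""
    # Each queue entry is (wake_time, tie_breaker, name)
    queue = [(0, i, name) for i, name in enumerate(agents)]
    heapq.heapify(queue)
    calls = []
    while queue and queue[0][0] <= max_time:
        now, order, name = heapq.heappop(queue)
        calls.append((now, name))
        delay = agents[name](now)
        if delay is None:
            delay = 1  # default wait of one time unit
        heapq.heappush(queue, (now + delay, order, name))
    return calls


def sleeper(now):
    # Dormant until t=10, then active every time unit
    return 10 - now if now < 10 else None


calls = run_schedule({"sleeper": sleeper}, max_time=12)
print(calls)
```

Because the only agent asks to be woken at t=10, the toy simulation jumps straight from t=0 to t=10 instead of ticking through the idle steps.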

Environment agents¶

In our simulation, we need a way to model how TV broadcasts news and how people who have a TV are exposed to it. We will only model one very viral TV broadcast, which we will call an event, and which has a high chance of infecting users with a TV.

There are several ways to model this behavior. We will do it with an Environment Agent. Environment agents are regular agents that interact with the environment but are invisible to other agents.

[65]:

import logging


class EventGenerator(BaseAgent):
    level = logging.INFO

    async def step(self):
        # Do nothing until the time of the event
        await self.at(self.model.event_time)
        self.info("TV event happened")
        self.model.prob_tv_spread = 0.5
        self.model.prob_neighbor_spread *= 2
        self.model.prob_neighbor_spread = min(self.model.prob_neighbor_spread, 1)
        await self.delay()
        self.model.prob_tv_spread = 0

        while self.alive:
            self.model.prob_neighbor_spread = self.model.prob_neighbor_spread * self.model.neighbor_factor
            if self.model.prob_neighbor_spread < 0.01:
                return self.die("neighbors can no longer spread the rumour")
            await self.delay()

Environment (Model)¶

Let’s define an environment model to test our event generator agent. This environment will have a single agent (the event generator). We will also tell the environment to save the value of prob_tv_spread after every step:

[66]:

class NewsEnvSimple(NetworkEnvironment):
    # Here we set the default parameters for our model
    # We will be able to override them on a per-simulation basis
    prob_tv_spread = 0.1
    prob_neighbor_spread = 0.1
    event_time = 10
    neighbor_factor = 0.9

    # This function initializes the model. It is run right at the end of the `__init__` function.
    def init(self):
        self.add_model_reporter("prob_tv_spread")
        self.add_agent(EventGenerator)

Once the environment has been defined, we can quickly run our simulation through the run method on NewsEnvSimple:

[67]:

it = NewsEnvSimple.run(iterations=1, max_time=14)

it[0].model_df()

[67]:

| time | step | agent_count | prob_tv_spread |
|---|---|---|---|
| 0.0 | 0 | 1 | 0.1 |
| 10.0 | 1 | 1 | 0.1 |
| 11.0 | 2 | 1 | 0.5 |
| 12.0 | 3 | 1 | 0.0 |
| 13.0 | 4 | 1 | 0.0 |
| 14.0 | 5 | 1 | 0.0 |

As we can see, the event occurred right after t=10, so by t=11 the value of prob_tv_spread was already set to 0.5.

You may notice nothing happened between t=0 and t=10. That is because there aren’t any other agents in the simulation, and our event generator explicitly waited until t=10.

Network agents¶

In our disinformation scenario, we will model our agents as a FSM with two states: neutral (default) and infected.

Here’s the code:

[68]:

class NewsSpread(Agent):
    has_tv = False
    infected_by_friends = False

    # The state decorator is used to define the states of the agent
    @state(default=True)
    def neutral(self):
        # The agent might have been infected by their infected friends since the last time they were checked
        if self.infected_by_friends:
            # Automatically transition to the infected state
            return self.infected
        # If the agent has a TV, they might be infected by the event
        if self.has_tv:
            if self.prob(self.model.prob_tv_spread):
                return self.infected

    @state
    def infected(self):
        for neighbor in self.iter_neighbors(state_id=self.neutral.id):
            if self.prob(self.model.prob_neighbor_spread):
                neighbor.infected_by_friends = True
        return self.delay(7)  # Wait for 7 days before trying to infect their friends again

We can check that our states are well defined:

[69]:

NewsSpread.states()

[69]:

['dead', 'neutral', 'infected']

Environment (Model)¶

Let’s modify our simple simulation. We will add a network of agents of type NewsSpread.

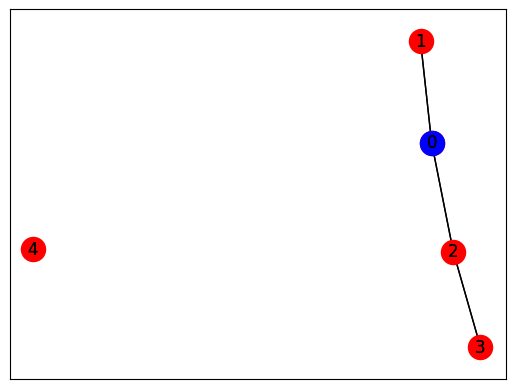

Only one agent (0) will have a TV (in blue).

[70]:

def generate_simple():
    G = nx.Graph()
    G.add_edge(0, 1)
    G.add_edge(0, 2)
    G.add_edge(2, 3)
    G.add_node(4)
    return G

G = generate_simple()

pos = nx.spring_layout(G)

nx.draw_networkx(G, pos, node_color='red')

nx.draw_networkx(G, pos, nodelist=[0], node_color='blue')

[71]:

class NewsEnvNetwork(Environment):
    prob_tv_spread = 0
    prob_neighbor_spread = 0.1
    event_time = 10
    neighbor_factor = 0.9

    def init(self):
        self.add_agent(EventGenerator)
        self.G = generate_simple()
        self.populate_network(NewsSpread)
        self.agent(node_id=0).has_tv = True
        self.add_model_reporter('prob_tv_spread')
        self.add_model_reporter('prob_neighbor_spread')

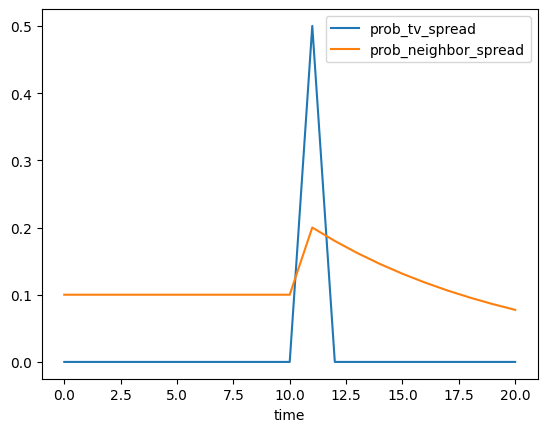

[72]:

it = NewsEnvNetwork.run(max_time=20)

it[0].model_df()

[72]:

| time | step | agent_count | prob_tv_spread | prob_neighbor_spread |
|---|---|---|---|---|
| 0.0 | 0 | 6 | 0.0 | 0.100000 |
| 1.0 | 1 | 6 | 0.0 | 0.100000 |
| 2.0 | 2 | 6 | 0.0 | 0.100000 |
| 3.0 | 3 | 6 | 0.0 | 0.100000 |
| 4.0 | 4 | 6 | 0.0 | 0.100000 |
| 5.0 | 5 | 6 | 0.0 | 0.100000 |
| 6.0 | 6 | 6 | 0.0 | 0.100000 |
| 7.0 | 7 | 6 | 0.0 | 0.100000 |
| 8.0 | 8 | 6 | 0.0 | 0.100000 |
| 9.0 | 9 | 6 | 0.0 | 0.100000 |
| 10.0 | 10 | 6 | 0.0 | 0.100000 |
| 11.0 | 11 | 6 | 0.5 | 0.200000 |
| 12.0 | 12 | 6 | 0.0 | 0.180000 |
| 13.0 | 13 | 6 | 0.0 | 0.162000 |
| 14.0 | 14 | 6 | 0.0 | 0.145800 |
| 15.0 | 15 | 6 | 0.0 | 0.131220 |
| 16.0 | 16 | 6 | 0.0 | 0.118098 |
| 17.0 | 17 | 6 | 0.0 | 0.106288 |
| 18.0 | 18 | 6 | 0.0 | 0.095659 |
| 19.0 | 19 | 6 | 0.0 | 0.086093 |
| 20.0 | 20 | 6 | 0.0 | 0.077484 |

In this case, notice that the inclusion of other agents (which run every step) means that the simulation did not skip to t=10.
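The prob_neighbor_spread column in the table above follows directly from the EventGenerator logic: the event doubles the probability (capped at 1), and every later step multiplies it by neighbor_factor. A small stand-alone check of that arithmetic, using a hypothetical helper `neighbor_spread_series` that is not part of Soil:

```python
def neighbor_spread_series(initial=0.1, factor=0.9, steps=10):
    """Values of prob_neighbor_spread from the event onwards."""
    value = min(initial * 2, 1)  # the event doubles the probability, capped at 1
    series = [value]
    for _ in range(steps - 1):
        value *= factor  # decay applied on each later step
        series.append(value)
    return series


series = neighbor_spread_series()
print([round(v, 6) for v in series])
```

The ten values correspond to the rows from t=11 to t=20, starting at 0.2 and ending at roughly 0.077484.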

Now, let’s look at the state of our agents in every step:

[73]:

analysis.plot(it[0])

No agent dataframe provided and no agent reporters found. Skipping agent plot.

Running in more scenarios¶

In real life, you probably want to run several simulations, varying some of the parameters so that you can compare and answer your research questions.

For instance:

- Does the outcome depend on the structure of our network? We will use different generation algorithms to compare them (Barabasi-Albert and Erdos-Renyi)

- How does neighbor spreading probability affect my simulation? We will try probability values in the range [0, 1], in intervals of 0.25.

[74]:

class NewsEnvComplete(Environment):
    prob_tv = 0.1
    prob_tv_spread = 0
    prob_neighbor_spread = 0.1
    event_time = 10
    neighbor_factor = 0.5
    generator = "erdos_renyi_graph"
    n = 100

    def init(self):
        self.add_agent(EventGenerator)
        opts = {"n": self.n}
        if self.generator == "erdos_renyi_graph":
            opts["p"] = 0.05
        elif self.generator == "barabasi_albert_graph":
            opts["m"] = 2
        self.create_network(generator=self.generator, **opts)

        self.populate_network([NewsSpread,
                               NewsSpread.w(has_tv=True)],
                              [1-self.prob_tv, self.prob_tv])
        self.add_model_reporter('prob_tv_spread')
        self.add_model_reporter('prob_neighbor_spread')
        self.add_agent_reporter('state_id', lambda a: getattr(a, "state_id", None))
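The two lists passed to populate_network above pair each agent class with a weight. How Soil distributes the classes internally is not shown in this tutorial, so the following is only a sketch of one plausible reading (independent weighted sampling per node), with hypothetical names such as `assign_classes` and string labels standing in for the agent classes.

```python
import random


def assign_classes(node_ids, classes, weights, seed=0):
    """Pick one agent class per node, with the given relative weights."""
    rng = random.Random(seed)
    chosen = rng.choices(classes, weights=weights, k=len(node_ids))
    return dict(zip(node_ids, chosen))


prob_tv = 0.1
assignment = assign_classes(range(100), ["no_tv", "tv"], [1 - prob_tv, prob_tv])
share_with_tv = sum(1 for c in assignment.values() if c == "tv") / len(assignment)
print(share_with_tv)
```

Under this assumption the share of agents with a TV is close to prob_tv on average, but it varies from one iteration to the next.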

This time, we set dump=True because we want to store our results to a database, so that we can later analyze them.

But since we do not care about existing results in the database, we will also set overwrite=True.

[75]:

N = 100

probabilities = [0, 0.25, 0.5, 0.75, 1.0]

generators = ["erdos_renyi_graph", "barabasi_albert_graph"]

it = NewsEnvComplete.run(name="newspread",
                         iterations=5, max_time=30, dump=True, overwrite=True,
                         matrix=dict(n=[N], generator=generators, prob_neighbor_spread=probabilities))

[INFO ][12:09:51] Output directory: /mnt/data/home/j/git/lab.gsi/soil/soil/docs/tutorial/soil_output

n = 100

generator = erdos_renyi_graph

prob_neighbor_spread = 0

n = 100

generator = erdos_renyi_graph

prob_neighbor_spread = 0.25

n = 100

generator = erdos_renyi_graph

prob_neighbor_spread = 0.5

n = 100

generator = erdos_renyi_graph

prob_neighbor_spread = 0.75

n = 100

generator = erdos_renyi_graph

prob_neighbor_spread = 1.0

n = 100

generator = barabasi_albert_graph

prob_neighbor_spread = 0

n = 100

generator = barabasi_albert_graph

prob_neighbor_spread = 0.25

n = 100

generator = barabasi_albert_graph

prob_neighbor_spread = 0.5

n = 100

generator = barabasi_albert_graph

prob_neighbor_spread = 0.75

n = 100

generator = barabasi_albert_graph

prob_neighbor_spread = 1.0

[76]:

DEFAULT_ITERATIONS = 5

assert len(it) == len(probabilities) * len(generators) * DEFAULT_ITERATIONS
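The count checked by the assertion above can be derived by enumerating the parameter matrix as a Cartesian product. The sketch below uses itertools.product on the same values; whether Soil expands the matrix in exactly this way internally is an assumption.

```python
from itertools import product

matrix = dict(
    n=[100],
    generator=["erdos_renyi_graph", "barabasi_albert_graph"],
    prob_neighbor_spread=[0, 0.25, 0.5, 0.75, 1.0],
)
iterations = 5

# One dict per parameter combination, in the order the keys were declared
combinations = [dict(zip(matrix, values)) for values in product(*matrix.values())]
print(len(combinations), len(combinations) * iterations)
```

This gives 10 parameter combinations and, with 5 iterations each, 50 runs in total.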

The results are conveniently stored in sqlite (history of agent and environment state) and the configuration is saved in a YAML file.

You can also export the results to GEXF format (dynamic network) and CSV using .run(dump=['gexf', 'csv']) or the command line flags --graph --csv.

[77]:

!tree soil_output

!du -xh soil_output/*

soil_output

└── newspread

├── newspread_1683108591.8199563.dumped.yml

└── newspread.sqlite

1 directory, 2 files

21M soil_output/newspread

Analysing the results¶

Loading data¶

Once the simulations are over, we can use soil to analyse the results.

There are two main ways: directly using the iterations returned by the run method, or loading up data from the results database. The latter is particularly useful to store data between sessions, and to accumulate results over multiple runs.

The main method to load data from the database is read_sql, which can be used in two ways:

- analysis.read_sql(<sqlite_file>) to load all the results from a sqlite database, e.g. read_sql('my_simulation/file.db.sqlite')

- analysis.read_sql(name=<simulation name>) will look for the default path for a simulation named <simulation name>

The result in both cases is a named tuple with four dataframes:

- config, which contains configuration parameters per simulation

- parameters, which shows the parameters used in every iteration of every simulation

- env, with the data collected from the model in each iteration (as specified in model_reporters)

- agents, like env, but for agent_reporters

Let’s see it in action by loading the stored results into a pandas dataframe:

[78]:

res = analysis.read_sql(name="newspread", include_agents=True)

Plotting data¶

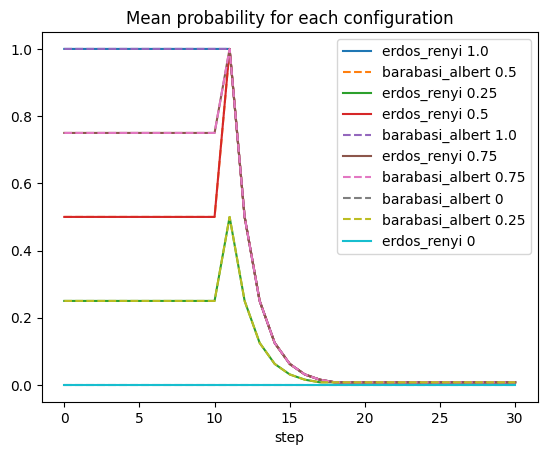

Once we have loaded the results from the file, we can use them just like any other dataframe.

Here are two examples: the first plots the mean neighbor spreading probability for each configuration, and the second plots the ratio of infected users in each of our simulations:

[79]:

for (g, group) in res.env.dropna().groupby("params_id"):
    params = res.parameters.query(f'params_id == "{g}"').iloc[0]
    # Drop the "_graph" suffix for a shorter label (rstrip strips characters, not a suffix)
    title = f"{params.generator.replace('_graph', '')} {params.prob_neighbor_spread}"
    prob = group.groupby(by=["step"]).prob_neighbor_spread.mean()
    line = "-"
    if "barabasi" in params.generator:
        line = "--"
    prob.rename(title).fillna(0).plot(linestyle=line)
plt.title("Mean probability for each configuration")
plt.legend();

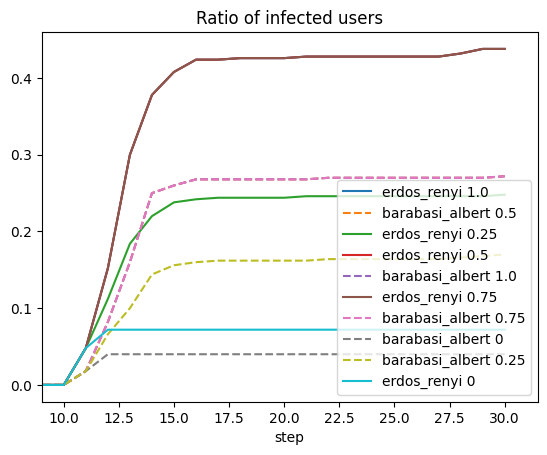

[80]:

for (g, group) in res.agents.dropna().groupby("params_id"):
    params = res.parameters.query(f'params_id == "{g}"').iloc[0]
    # Drop the "_graph" suffix for a shorter label (rstrip strips characters, not a suffix)
    title = f"{params.generator.replace('_graph', '')} {params.prob_neighbor_spread}"
    counts = group.groupby(by=["step", "state_id"]).value_counts().unstack()
    line = "-"
    if "barabasi" in params.generator:
        line = "--"
    (counts.infected/counts.sum(axis=1)).rename(title).fillna(0).plot(linestyle=line)
plt.legend()
plt.xlim([9, None]);
plt.title("Ratio of infected users");
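The quantity plotted in the second cell is, for each step, the number of infected agents divided by the total number of agents. The same computation is sketched below without pandas, on a handful of hypothetical (step, state_id) records; `infected_ratio` is an illustrative helper, not part of soil.analysis.

```python
from collections import Counter, defaultdict


def infected_ratio(records):
    """Map each step to the fraction of agents whose state is 'infected'."""
    per_step = defaultdict(Counter)
    for step, state_id in records:
        per_step[step][state_id] += 1
    return {
        step: counts["infected"] / sum(counts.values())
        for step, counts in sorted(per_step.items())
    }


# Hypothetical (step, state_id) rows for four agents over two steps
records = [
    (0, "neutral"), (0, "neutral"), (0, "neutral"), (0, "neutral"),
    (1, "infected"), (1, "neutral"), (1, "neutral"), (1, "neutral"),
]
ratios = infected_ratio(records)
print(ratios)
```

Steps in which no agent is infected yield a ratio of 0, which mirrors the fillna(0) call in the plotting code.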

Data format¶

Parameters¶

The parameters dataframe has three keys:

- The identifier of the simulation. This will be shared by all iterations launched in the same run

- The identifier of the parameters used in the simulation. This will be shared by all iterations that have the exact same set of parameters.

- The identifier of the iteration. Each row should have a different iteration identifier

There will be one column for each parameter passed to the environment. In this case, that’s three: generator, n and prob_neighbor_spread.

[81]:

res.parameters.head()

[81]:

| iteration_id | params_id | simulation_id | generator | n | prob_neighbor_spread |
|---|---|---|---|---|---|
| 0 | 39063f8 | newspread_1683108591.8199563 | erdos_renyi_graph | 100 | 1.0 |
| | 8f26adb | newspread_1683108591.8199563 | barabasi_albert_graph | 100 | 0.5 |
| | 92fdcb9 | newspread_1683108591.8199563 | erdos_renyi_graph | 100 | 0.25 |
| | cb3dbca | newspread_1683108591.8199563 | erdos_renyi_graph | 100 | 0.5 |
| | d1fe9c1 | newspread_1683108591.8199563 | barabasi_albert_graph | 100 | 1.0 |

Configuration¶

This dataset is indexed by the identifier of the simulation, and there will be a column per each attribute of the simulation. For instance, there is one for the number of processes used, another one for the path where the results were stored, etc.

[82]:

res.config.head()

[82]:

| index | version | source_file | name | description | group | backup | overwrite | dry_run | dump | ... | num_processes | exporters | model_reporters | agent_reporters | tables | outdir | exporter_params | level | skip_test | debug | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| simulation_id | |||||||||||||||||||||

| newspread_1683108591.8199563 | 0 | 2 | None | newspread | None | False | True | False | True | ... | 1 | ["<class 'soil.exporters.default'>"] | {} | {} | {} | /mnt/data/home/j/git/lab.gsi/soil/soil/docs/tu... | {} | 20 | False | False |

1 rows × 28 columns

Model reporters¶

The env dataframe includes the data collected from the model. The keys in this case are the same as parameters, and an additional one: step.

[83]:

res.env.head()

[83]:

| simulation_id | params_id | iteration_id | step | agent_count | time | prob_tv_spread | prob_neighbor_spread |
|---|---|---|---|---|---|---|---|
| newspread_1683108591.8199563 | ff1d24a | 0 | 0 | 101 | 0.0 | 0.0 | 0.0 |
| | | | 1 | 101 | 1.0 | 0.0 | 0.0 |
| | | | 2 | 101 | 2.0 | 0.0 | 0.0 |
| | | | 3 | 101 | 3.0 | 0.0 | 0.0 |
| | | | 4 | 101 | 4.0 | 0.0 | 0.0 |

Agent reporters¶

This dataframe reflects the data collected for all the agents in the simulation, in every step where data collection was invoked.

The key in this dataframe is similar to the one in the parameters dataframe, but there will be two more keys: the step and the agent_id. There will be a column per each agent reporter added to the model. In our case, there is only one: state_id.

[84]:

res.agents.head()

[84]:

| simulation_id | params_id | iteration_id | step | agent_id | state_id |
|---|---|---|---|---|---|
| newspread_1683108591.8199563 | ff1d24a | 0 | 0 | 0 | None |
| | | | | 1 | neutral |
| | | | | 2 | neutral |
| | | | | 3 | neutral |
| | | | | 4 | neutral |